Nvidia H100 Takes to the Skies: Is Ground-Based Computing Dead? Unveiling the Brutal Engineering of “Space-Based Computing”

Introduction: Ground data centers are devouring global electricity. Is burning coal really the endgame for AI? As Silicon Valley giants and Chinese tech powerhouses send GPUs into orbit, an arms race for “space-based computing” has begun. This article cuts through the jargon to explain what space computing really involves, and why it could become the business that helps cool down the Earth.

I. Introduction: Stop Competing on Earth, Computing Needs to “Break Out”

Guys, stop fixating on ground-based data centers. Today’s large AI models are electricity-guzzling behemoths. Ground data centers consume vast amounts of power and occupy significant land and water resources; in some regions, their demand for cooling water is already straining local rivers. With computing demand growing relentlessly, Earth is struggling to keep up. So the mavericks have decided to send computing power into space. This isn’t a sci-fi movie; it’s an engineering effort already under way. Nvidia’s H100 has already hitched a ride on a rocket. Musk’s Starlink is planning to build data centers in orbit. Google and Chinese players are all eyeing this “extraterrestrial realm.” Today, let’s skip the fluff and dig into the underlying logic of this hardcore business.

II. Debunking Myths: Don’t Mistake Satellites for “Remote Hard Drives”

Many believe space computing simply means moving servers onto satellites. If so, you’re oversimplifying aerospace engineering. Traditional satellites follow a “sense in space, compute on the ground” model: they capture images and transmit the raw data back to Earth. A single high-resolution remote sensing scene can run to several gigabytes or more, and a satellite can only downlink during brief passes over ground stations, so getting that data down can take an eternity. By the time ground processing is complete, a forest fire might have already turned the forest into charcoal.

Modern space computing follows a “sense and compute in space” approach. Data is cleaned and AI models are run directly in orbit, and the satellite sends back only a concise message such as “Fire at coordinates X, send help.” Proponents cite bandwidth savings of 90% or more and far faster response. This isn’t about “moving servers”; it’s about “relocating computing power.” It means edge computing on satellites that, in low Earth orbit, travel at roughly 27,000 kilometers per hour.
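To put rough numbers on the bandwidth argument, here is a minimal back-of-envelope sketch. The scene size, alert size, and downlink rate are illustrative assumptions, not figures from any real mission, and protocol overhead is ignored.

```python
# Back-of-envelope comparison: downlinking a raw scene vs. an onboard-generated alert.
# All constants below are illustrative assumptions, not real mission parameters.

def downlink_seconds(payload_bytes: float, link_bps: float) -> float:
    """Time to send a payload over a link of the given bit rate (no protocol overhead)."""
    return payload_bytes * 8 / link_bps

def bandwidth_saving(raw_bytes: float, sent_bytes: float) -> float:
    """Fraction of downlink volume avoided by sending only the processed result."""
    return 1 - sent_bytes / raw_bytes

RAW_IMAGE_BYTES = 10e9  # assumed 10 GB raw scene
ALERT_BYTES = 200       # assumed short alert: event type, coordinates, timestamp
LINK_BPS = 300e6        # assumed 300 Mbit/s downlink during a ground-station pass

raw_t = downlink_seconds(RAW_IMAGE_BYTES, LINK_BPS)
alert_t = downlink_seconds(ALERT_BYTES, LINK_BPS)
print(f"raw scene: {raw_t:.0f} s of pass time, alert: {alert_t * 1e6:.1f} microseconds")
print(f"downlink volume saved: {bandwidth_saving(RAW_IMAGE_BYTES, ALERT_BYTES):.6%}")
```

With these assumed numbers the raw scene eats several minutes of a pass that typically lasts only minutes, while the alert goes through almost instantly. The 90% figure above is a conservative case in which some processed imagery still has to come down.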

III. Layman’s Terms: Why Bother Going to Space?

Some ask: “Isn’t it enough to build data centers on Earth?” Buddy, have you seen industrial electricity bills? Computing in space offers three major advantages over ground-based alternatives:

- Abundant Solar Power, Free of Charge: Above the atmosphere, sunlight arrives unfiltered, and in a dawn-dusk sun-synchronous orbit a satellite can stay in sunlight almost continuously, so deployed panels charge nearly 24/7. The annual energy collected per panel can be several times (often quoted as about five times) that of a ground installation, with no fuel-related carbon emissions during operation.

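- Natural Ultra-Low-Temperature Cooling: The cosmic background temperature is about -270°C. Because a vacuum allows no convection, all waste heat must ultimately be radiated away through radiators; with a well-designed heat-transport and radiator system, data centers need no chillers, cooling towers, or cooling water. Proponents claim this saves about 40% of the cooling energy compared with ground-based facilities.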

- Global Coverage, No Blind Spots: Satellites reach areas that ground fiber-optic cables can’t. Whether it’s ships on the high seas or polar expeditions, computing services remain available. This global reach leaves ground-based facilities envious.
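The solar and cooling claims above can be sanity-checked with two textbook formulas: annual yield = irradiance × capacity factor × hours per year, and the Stefan-Boltzmann law for a radiator, P = εσA(T⁴ - T_sink⁴). The sketch below assumes a 99% sunlit fraction in orbit, a 20% capacity factor at a good ground site, and a radiator at 300 K with emissivity 0.9. These are illustrative values, and the 3 K sink ignores absorbed sunlight and Earth's infrared, both of which make real radiators larger.

```python
SIGMA = 5.670374419e-8   # Stefan-Boltzmann constant, W/(m^2 K^4)
SOLAR_CONSTANT = 1361.0  # mean solar irradiance above the atmosphere, W/m^2
HOURS_PER_YEAR = 8760

def annual_yield_kwh_per_m2(irradiance_w_m2: float, capacity_factor: float) -> float:
    """Solar energy incident on 1 m^2 of panel per year, before cell efficiency."""
    return irradiance_w_m2 * capacity_factor * HOURS_PER_YEAR / 1000

def radiator_area_m2(heat_w: float, temp_k: float,
                     emissivity: float = 0.9, sink_k: float = 3.0) -> float:
    """One-sided radiator area needed to reject heat_w by thermal radiation alone.
    Idealized: ignores absorbed sunlight and Earth infrared (assumption)."""
    flux_w_m2 = emissivity * SIGMA * (temp_k ** 4 - sink_k ** 4)
    return heat_w / flux_w_m2

# Assumed: ~99% sunlit in a dawn-dusk orbit vs. 1000 W/m^2 peak at 20% capacity factor on the ground.
space = annual_yield_kwh_per_m2(SOLAR_CONSTANT, 0.99)
ground = annual_yield_kwh_per_m2(1000.0, 0.20)
print(f"space/ground yield ratio: {space / ground:.1f}x")

# Assumed: 1 kW of GPU heat rejected by a radiator running at 300 K (about 27 C).
print(f"radiator area per kW: {radiator_area_m2(1000.0, 300.0):.2f} m^2")
```

Under these assumptions the orbital panel collects several times the ground yield, and each kilowatt of GPU heat needs roughly 2.4 m² of one-sided radiator, which is why radiators, not chillers, dominate the thermal design of orbital computing.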

IV. Engineering Jargon: The “Three Great Mountains” of Space Computing

Don’t be fooled by the hype; the engineering challenges are immense. Engineers have burned through countless cigarettes trying to tackle these three issues:

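- Radiation Hardening: High-energy particles in space can flip bits and disrupt chips, causing “brain clots” (single-event upsets), while accumulated radiation dose degrades them over time. Unprotected commercial chips can suffer frequent errors and shortened lifetimes in orbit, so radiation-hardened designs or architectural redundancy, such as running critical logic in triplicate and voting on the result, are essential.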

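- Link Budget: Laser inter-satellite links are becoming standard, with target rates around 100 Gbps. The link budget must balance transmit power and telescope gain against path loss over hundreds to thousands of kilometers, and the beam is so narrow that keeping two laser terminals pointed at each other while both satellites move at orbital speed is like threading a needle from a moving high-speed train.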

- Power Management: This is where veterans like Imax Power shine. A satellite in a typical low Earth orbit passes through Earth’s shadow on most orbits, roughly 15 times a day, so its batteries face thousands of charge-discharge cycles a year and need exceptionally long cycle life. Any misstep in charge-discharge management can turn a satellite into space debris. Efficient, stable power modules are the heartbeat of computing power in space.
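Each of the three challenges above has a standard first-order calculation. The hedged sketch below shows bitwise triple modular redundancy (one common form of architectural redundancy), a simplified optical link budget using ideal aperture gains, and the number of eclipse cycles a low-orbit battery sees per year. All function names and parameter values (1550 nm wavelength, 8 cm apertures, 2,000 km range, 6 dB of pointing and optical losses, 550 km altitude) are illustrative assumptions, not specifications of any real system.

```python
import math

# Radiation hardening: triple modular redundancy (TMR)
def tmr_vote(a: int, b: int, c: int) -> int:
    """Bitwise majority of three redundant copies; masks a bit flip in any one copy."""
    return (a & b) | (a & c) | (b & c)

# Link budget: simplified optical inter-satellite link (ideal apertures, assumed values)
def aperture_gain_db(diameter_m: float, wavelength_m: float) -> float:
    """Ideal gain of a circular aperture, (pi * D / lambda)^2, in dB."""
    return 20 * math.log10(math.pi * diameter_m / wavelength_m)

def free_space_loss_db(distance_m: float, wavelength_m: float) -> float:
    """Free-space path loss, (4 * pi * d / lambda)^2, in dB."""
    return 20 * math.log10(4 * math.pi * distance_m / wavelength_m)

def received_power_dbm(tx_dbm: float, tx_aperture_m: float, rx_aperture_m: float,
                       distance_m: float, wavelength_m: float,
                       other_losses_db: float) -> float:
    """Received power = transmit power + both aperture gains - path loss - other losses."""
    return (tx_dbm
            + aperture_gain_db(tx_aperture_m, wavelength_m)
            + aperture_gain_db(rx_aperture_m, wavelength_m)
            - free_space_loss_db(distance_m, wavelength_m)
            - other_losses_db)

# Power management: battery cycling in a circular low Earth orbit
MU_EARTH = 3.986004418e14  # Earth's gravitational parameter, m^3/s^2
R_EARTH = 6_371_000.0      # mean Earth radius, m

def orbital_period_s(altitude_m: float) -> float:
    """Kepler's third law for a circular orbit: T = 2 * pi * sqrt(a^3 / mu)."""
    a = R_EARTH + altitude_m
    return 2 * math.pi * math.sqrt(a ** 3 / MU_EARTH)

def eclipse_cycles_per_year(altitude_m: float) -> float:
    """Upper bound: one charge/discharge cycle per orbit (assumes every orbit has an eclipse)."""
    return 365.25 * 86400 / orbital_period_s(altitude_m)

# One copy suffers a flip in bit 3; the vote restores the original word.
print(bin(tmr_vote(0b1011, 0b1011, 0b0011)))

# Hypothetical link: 1 W (30 dBm) laser at 1550 nm, 8 cm apertures, 2,000 km, 6 dB extra losses.
rx = received_power_dbm(30.0, 0.08, 0.08, 2_000e3, 1550e-9, 6.0)
print(f"received power: {rx:.1f} dBm")

# Hypothetical 550 km orbit.
print(f"period: {orbital_period_s(550e3) / 60:.1f} min, "
      f"cycles/year: {eclipse_cycles_per_year(550e3):.0f}")
```

At 550 km the orbit takes about 95 minutes, which works out to roughly 5,500 cycles a year if every orbit includes an eclipse; over a multi-year mission that is tens of thousands of cycles.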

V. Global Landscape: A Duel Between China and the US

Currently, only China and the US are in the top tier of this race.

United States: Follows a “giants + mavericks” approach. Musk has floated using Starlink V3-class satellites to deploy on the order of 100 GW of solar-powered computing per year. Starcloud has put an Nvidia H100 directly into a small satellite. Google is pursuing “Project Suncatcher” with TPUs, envisioning computing power as ubiquitous as air.

China: Focuses on “engineering implementation + full-stack networking.” Guoxing Aerospace’s “Three-Body Computing Constellation” leads the charge. With up to 744 TOPS of computing power per satellite, it’s no empty claim. Last year, it ran a traffic model in Guangzhou and delivered results in just three minutes; response times like this are what make commercial services viable. Zhejiang Lab is also ramping up efforts, planning to reach 1,000 satellites by 2030. We’re not about hype; we’re about solving real-world problems.

VI. Industry Chain Overview: Who’s Quietly Making a Fortune?

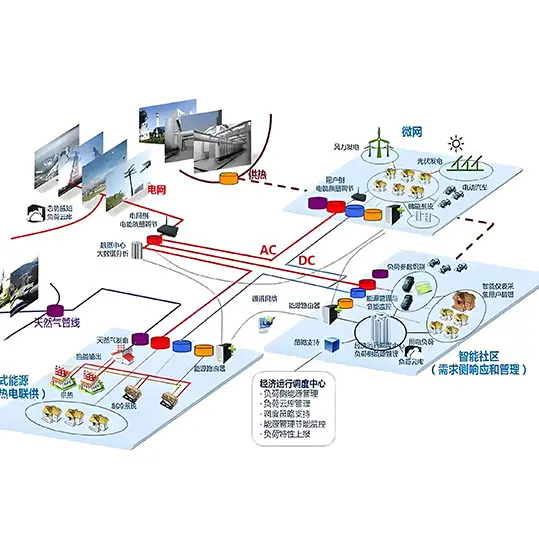

Space computing isn’t a one-company show. It relies on a long and demanding industry chain:

- Upstream: Rocket and satellite manufacturers. Without cost-effective launches, space computing remains a pipe dream. Domestic players such as China Satellite and Shanghai Hugong lead the way.

- Midstream: Chip and communication providers. Fudan Microelectronics and Unigroup Guoxin Microelectronics craft the “hearts” of the system, while Accelink Technologies handles laser communication, keeping the “blood” flowing.

- Downstream: Computing power scheduling and operation. Putian Technology and Kaipu Cloud manage integrated space-ground scheduling. They sell computing power from space to industries in need.

VII. An Engineer’s Drunk Confession: What Does the Future Hold?

Honestly, space computing is still in its infancy. Costs remain high, and radiation-resistant technology has a long road ahead. But the trend is irreversible. If AI keeps outgrowing ground-based energy supplies, space may become the main avenue for growth. Future computing hubs might not be in Guizhou’s mountains but in the vast expanse of space above us. Whether it’s safeguarding satellite power or breaking through core architectural limits, we engineers must roll up our sleeves and get our hands dirty. Imax Power will keep a close eye on high-reliability power demands. After all, without stable energy, even the most powerful GPUs are useless scrap.

Computing power “going overseas” is outdated. Computing power “going cosmic” is the true frontier. Fellow engineers, are you ready to take flight?